Processor Communication: Intel's Ring Bus, AMD's Infinity Fabric, Intel's Mesh architecture

This post is my exploration of processor interconnects: why they matter, the solutions from Intel and AMD, and the general KPIs to look for in processor communication.

A multi-core processor is only as fast as the pathways that move data between its cores, caches, and memory. This post covers three interconnects that address that problem: Intel's ring bus and Mesh architecture, and AMD's Infinity Fabric.

Intel's Ring Bus: Navigating Circular Pathways

Intel's Ring Bus architecture acts as a pivotal communication pathway within select processor designs. Data flows along this ring-shaped pathway, facilitating rapid exchange of information between different parts of the processor.

Intel's ring-based designs use a dual ring bus, in which the cores, cache, and peripheral devices are connected as stops on a ring. Imagine it as a roundabout or traffic circle: data enters at one entrance, circulates around the ring, and exits at its destination. The dual-ring setup lets multiple transfers be in flight at the same time.

From a latency perspective, this design is highly efficient while the ring is small, much like smooth traffic flow in a roundabout. Scalability is the challenge: every added core or component becomes another stop, so the ring gets longer, data has farther to travel, and more traffic competes for the same pathway.
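
To make the scaling problem concrete, here is a minimal sketch of hop counts on a ring. It assumes a bidirectional ring where a transfer takes the shorter way around; the function names and the stop counts are mine, chosen for illustration, and hop count is only a rough proxy for latency.

```python
def ring_hops(src: int, dst: int, n_stops: int) -> int:
    """Hops between two stops, assuming traffic takes the shorter direction."""
    clockwise = (dst - src) % n_stops
    counter_clockwise = (src - dst) % n_stops
    return min(clockwise, counter_clockwise)


def worst_case_hops(n_stops: int) -> int:
    """Distance from stop 0 to the farthest stop on the ring."""
    return max(ring_hops(0, dst, n_stops) for dst in range(n_stops))


# Illustrative ring sizes, not tied to any specific product.
for n in (4, 8, 16, 32):
    print(f"{n:2d} stops: farthest stop is {worst_case_hops(n)} hops away")
```

The farthest stop is always half the ring away, so doubling the number of stops doubles the worst-case distance.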

Intel’s Mesh Architecture

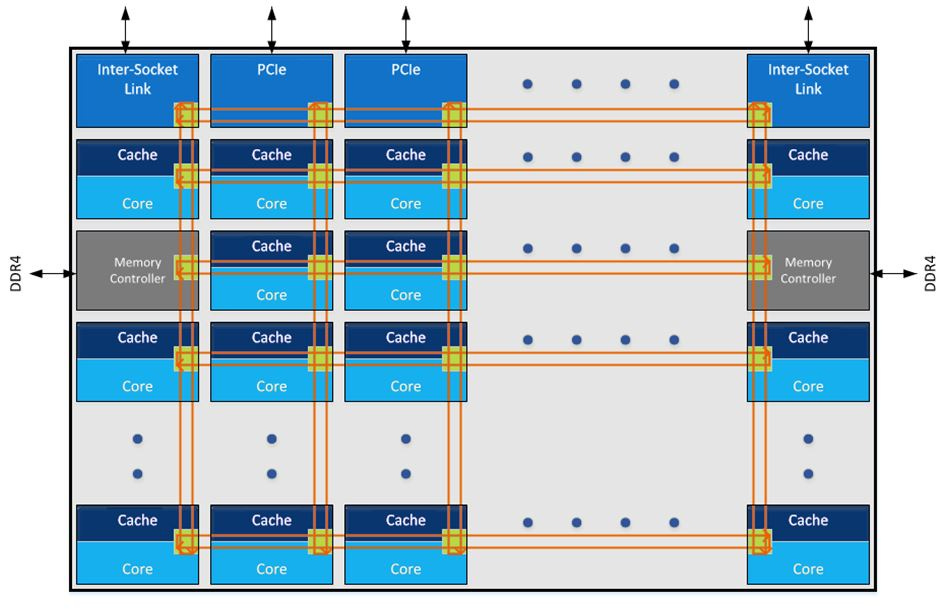

The Mesh architecture replaces the traditional ring bus topology with a mesh network. In a mesh, each core, cache, memory controller, and I/O hub sits at a node of a two-dimensional grid, and each node links to its neighbours. Data travels through the mesh by hopping from node to node until it reaches its destination.

This approach offers several advantages over the ring bus architecture:

Scalability: Mesh architecture is highly scalable, allowing Intel to build processors with a large number of cores efficiently. As more cores are added, the mesh network can expand to accommodate them without encountering the same scalability limitations as the ring bus architecture.

Reduced Latency: In certain scenarios, mesh architecture can provide lower latency compared to ring bus architectures, especially in systems with many cores. With the mesh network, data can take more direct paths to its destination, reducing the time it takes for communication between cores.

Improved Bandwidth Allocation: Mesh architecture allows for more efficient allocation of bandwidth. Since data can travel through multiple paths simultaneously, the mesh network can dynamically adjust to traffic patterns, optimizing bandwidth utilization.

However, there are also some considerations with mesh architecture:

Complexity: The mesh network topology is more complex than the ring bus architecture, which can increase design complexity and manufacturing costs.

Power Consumption: In some scenarios, the mesh architecture may consume more power than the ring bus architecture because of the increased number of interconnects and nodes.
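
The node-to-node hopping described above can be sketched with dimension-ordered (X-then-Y) routing, a common textbook scheme for grids. I am using it as an assumption for illustration, not as a description of Intel's actual routing logic; `xy_route` and the grid coordinates are hypothetical.

```python
def xy_route(src, dst):
    """Return the (row, col) nodes visited going from src to dst.

    Moves along the row first (X direction), then along the column (Y direction).
    """
    row, col = src
    path = [(row, col)]
    while col != dst[1]:
        col += 1 if dst[1] > col else -1
        path.append((row, col))
    while row != dst[0]:
        row += 1 if dst[0] > row else -1
        path.append((row, col))
    return path


path = xy_route((0, 0), (2, 3))
print(path)
print("hops:", len(path) - 1)
```

The hop count is simply the row distance plus the column distance, which is why a grid keeps paths short as the node count grows.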

AMD's Infinity Fabric: Embracing Scalability and Flexibility

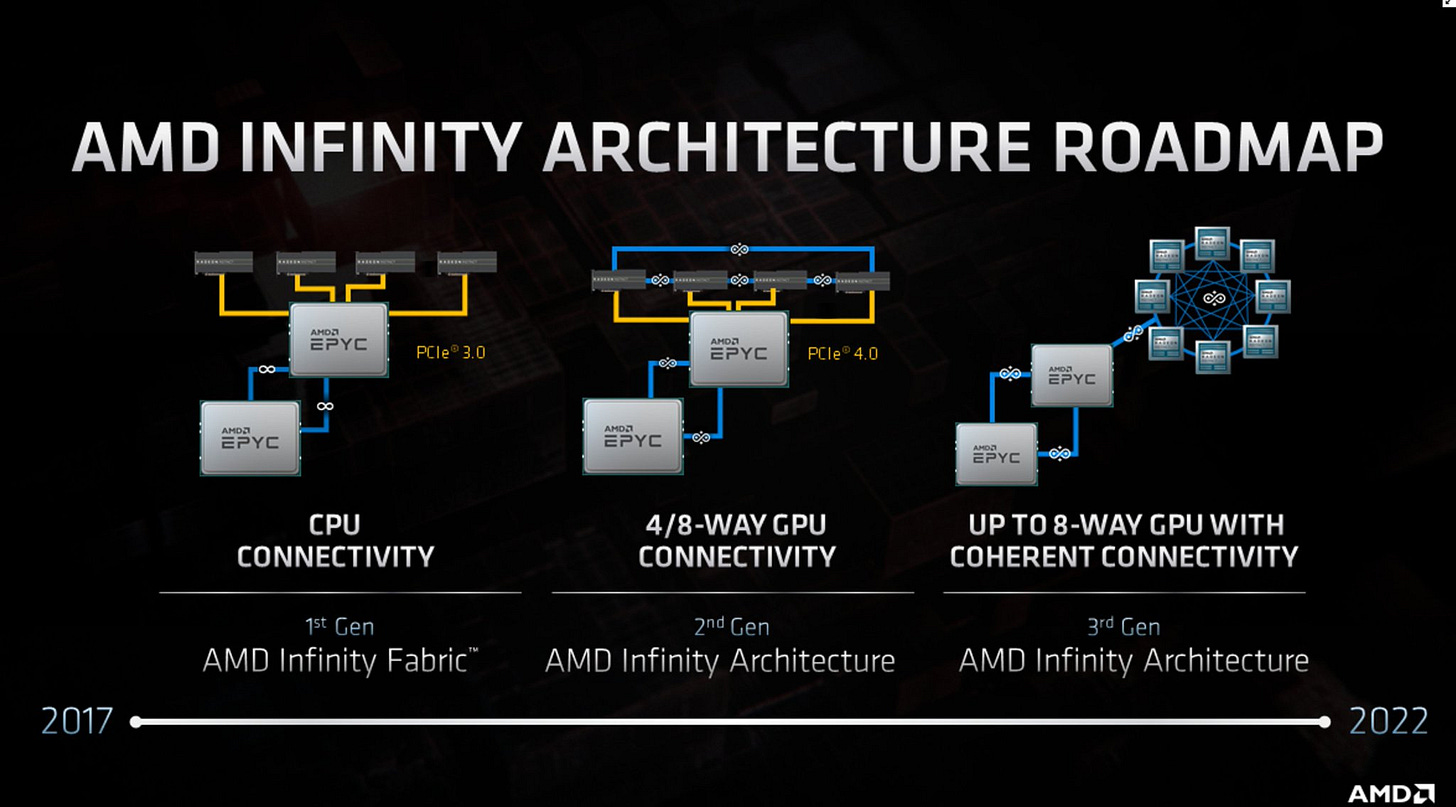

AMD's Infinity Fabric takes a different approach: a large block of connections serves as the backbone for communication between components. This modular design, akin to a road intersection with a traffic cop, makes it straightforward to integrate various components within the processor.

The advantage of Infinity Fabric lies in its scalability and flexibility. Adding new components is akin to building a new road connected to the existing intersection. The central controller, or "traffic cop," manages the flow of data, ensuring efficient communication between different modules. This modular design enables AMD to swiftly develop and deploy new CPU configurations, leveraging existing building blocks without extensive re-engineering.

However, this design is not without its drawbacks. Latency is a concern, particularly between Core Complexes (CCXs), where data must pass through the central intersection. Independent work can be scheduled on each core separately, but data dependencies that cross CCX boundaries add latency and can reduce overall system performance.
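
A toy model of that cross-CCX penalty might look like the sketch below. Everything numeric here is a placeholder: the costs are in arbitrary units rather than measured latencies, `cores_per_ccx` is a parameter that varies by product generation, and the function names are hypothetical.

```python
def ccx_of(core_id: int, cores_per_ccx: int = 4) -> int:
    """Index of the CCX that a core belongs to (cores numbered consecutively)."""
    return core_id // cores_per_ccx


def relative_latency(core_a: int, core_b: int, cores_per_ccx: int = 4,
                     intra_ccx_cost: float = 1.0, fabric_cost: float = 2.0) -> float:
    """Relative core-to-core cost in arbitrary units (placeholders, not measurements)."""
    if ccx_of(core_a, cores_per_ccx) == ccx_of(core_b, cores_per_ccx):
        return intra_ccx_cost
    # Crossing CCXs adds a trip through the fabric on top of the local cost.
    return intra_ccx_cost + fabric_cost


print(relative_latency(0, 3))  # both cores in CCX 0
print(relative_latency(0, 4))  # core 4 sits in CCX 1
```

The practical takeaway is that threads which share data heavily tend to benefit from being placed in the same CCX.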

Performance Considerations: Delving into Latency and Bandwidth

When evaluating the performance of interconnect fabrics like Intel's Ring Bus and AMD's Infinity Fabric, several key metrics come into play, notably latency and bandwidth.

Latency: The time it takes for data to travel from one core to another. Latency is a critical determinant of system responsiveness and performance. As a ring grows, latency may rise because data must travel a longer distance and may need additional repeaters along the way.

Bandwidth: The amount of data the interconnect can move per unit of time. As core counts rise, so does the demand for bandwidth, and the interconnect must sustain high data transfer rates to keep every core fed. Insufficient bandwidth creates bottlenecks that limit overall system throughput.
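
The two metrics combine in a simple back-of-the-envelope way, sketched below. The link width, clock, and per-hop latency are hypothetical values I picked for round numbers, and the model ignores contention, protocol overhead, and queuing.

```python
def peak_bandwidth_gb_s(link_width_bytes: int, clock_ghz: float, links: int = 1) -> float:
    """Peak bandwidth: bytes moved per cycle times cycles per nanosecond."""
    return link_width_bytes * clock_ghz * links


def transfer_time_ns(payload_bytes: int, hops: int, hop_latency_ns: float,
                     bandwidth_gb_s: float) -> float:
    """Hop latency plus serialization time (1 GB/s equals 1 byte per nanosecond)."""
    return hops * hop_latency_ns + payload_bytes / bandwidth_gb_s


# Hypothetical link: 32 bytes per cycle at 2.0 GHz.
bw = peak_bandwidth_gb_s(32, 2.0)
print(f"peak bandwidth: {bw} GB/s")
print(f"64-byte transfer over 3 hops: {transfer_time_ns(64, 3, 1.0, bw)} ns")
```

For small payloads such as a single cache line the hop term dominates, which is why topology matters so much for core-to-core latency.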

Exploring Theoretical Limits

Theoretical limits for interconnect fabrics can be estimated from factors such as size (read: the number of node points, ring stops, and so on), core count, and data transmission frequency. As core counts rise, traditional ring-based interconnects run into scaling constraints, which prompts the exploration of alternatives.
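
One way to see the limit is to compare average hop counts as node count grows. The sketch below uses closed-form averages under idealized assumptions of my own: a bidirectional ring with an even number of stops, a square mesh, shortest-path routing, uniform traffic between distinct nodes, and no contention.

```python
import math


def ring_mean_hops(n: int) -> float:
    """Mean shortest-path hops on a bidirectional ring with an even number of stops."""
    if n < 2 or n % 2:
        raise ValueError("n must be an even number of stops >= 2")
    return n * n / (4 * (n - 1))


def mesh_mean_hops(n: int) -> float:
    """Mean Manhattan-distance hops between distinct nodes of a k x k mesh, n = k*k."""
    k = math.isqrt(n)
    if k < 2 or k * k != n:
        raise ValueError("n must be a perfect square >= 4")
    return 2 * k / 3


for n in (4, 16, 64, 256):
    print(f"{n:3d} nodes: ring {ring_mean_hops(n):6.2f} hops, "
          f"mesh {mesh_mean_hops(n):5.2f} hops")
```

The ring average grows roughly linearly with node count (about n/4), while the mesh average grows with the square root of n, which matches the intuition that rings stop scaling first.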

By embracing innovative interconnect architectures, future processors can transcend existing limitations, unlocking new levels of efficiency and performance.

As the computing landscape evolves, striking a balance between efficiency and scalability remains paramount. Innovations in interconnect technologies will continue to shape the future of processor architectures, driving advancements in performance, efficiency, and versatility.

Disclaimer: The other interconnect I have yet to explore is NVLink. I hope to read more about it before writing on it!